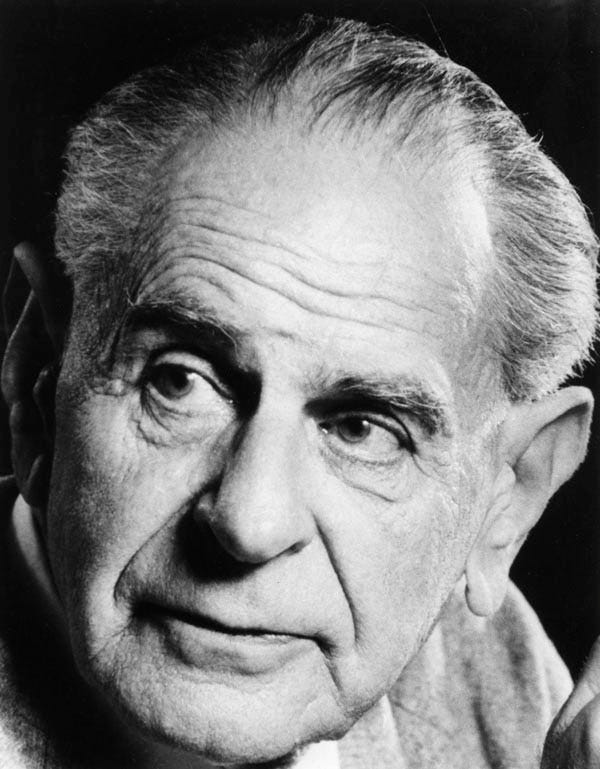

How do you distinguish between science and pseudoscience? Karl Popper, a philosopher of science, thought hard about this problem and arrived at a principle called falsifiability.

Scientific theories are inherently falsifiable. That is to say, they are amenable to refutation. No amount of confirming evidence proves a scientific theory valid; by contrast, repeated and verified observations that contradict the theory can take it back to the proverbial drawing board. Theories are not accepted because they are “True” in an absolute sense, but because the predictions they make haven’t been falsified thus far.

Scientists make specific predictions based on their theory, and in doing so, they provide the world with the exact tools needed to dismantle their work. They run the risk of losing face and intellectual credibility at any point. (On the other hand, the more specific and riskier the predictions, and the longer they remain unfalsified—the glory of unravelling something truly remarkable!)

Pseudoscience, on the other hand, seeks only confirming evidence. Think of stuff like astrology, certain conspiracy theories, or diatribes against modern medicine. They almost always sound plausible because they are designed to be “watertight.” However, proponents of these don’t provide anything falsifiable. There’s loads of contrary evidence, of course! But in the absence of well-defined falsifiability criteria, such evidence is simply “absorbed” or argued away:

“The data doesn’t show it because the liberal elites are suppressing the ‘real’ results,”

“the entire study regarding the efficacy of vaccines is a plant by Big Pharma to protect their profits,”

“Homeopathy works, but you don’t trust the results only because it’s not approved by the West,” and so forth.

Because these claims never specify what would count as a “failure,” they run no risk. They conveniently seize every feeble corroboration only to gloat. And, to be clear, the question here is not one of truth, but that of logic—indeed, liberal elites might have their political biases just as Big Pharma is primarily motivated by commercial gains. But those do not automatically validate these claims. They have to stand the test of falsifiability independently.

This is where the logic meets temperament. Falsifiability is, at its heart, an act of humility. To be scientific is to admit, “I could be wrong, and here is exactly how you can prove it.” It is full of doubt, caveats, and accountability. Does science—and more than science, the industry that is built on the foundation of science and technology—always show intellectual humility and accountability? Of course not. But that is at least part of its philosophy. While individual actors or corporations may fail, the scientific process—rooted in peer review and the constant threat of refutation—provides a level of scrutiny that pseudoscience avoids by design. Pseudoscience is defined by arrogance; it starts with the conclusion and works backward, ensuring it is never vulnerable to reality.

Beyond the realm of formal science, this “scientific temper” is a powerful tool for our civic and cultural discourse—whether on social media or in the real world. Logic ought to trump emotion, or at the very least, provide a necessary check on it. In policy-related discussions, we often see positions taken based on one’s political alignments rather than the substance of a proposal. A policy is labeled “pro-people” or “anti-people” based on who proposed it, rather than a rigorous analysis of its mechanics.

Similarly, in the debates around history and linguistics, we encounter claims of “primacy,” “antiquity,” or “superiority” of certain cultures or languages. Such claims frequently function as social paradigms: mental models so central to our identity that we would rather explain away a mountain of evidence than admit the model has entered a state of crisis. Many of them are patently unfalsifiable, if not already proven false by existing evidence.

Why do we gravitate toward these “watertight” claims in history or politics? Why do we often take a black-and-white position or remain obsessed with our antiquity and a purported “glory of the past”? It is often because they provide an identity-based safety net. Logic and scientific temper, on the other hand, are discomfiting; they induce doubt and offer no consolation or comfort.

In an echo chamber, you are embraced for toeing the group’s line and pounced upon if you find errors or even ask questions. On the other hand, in a critical peer review, you are rewarded for finding weak points, but also subject to criticism for illogical claims—however emotionally appealing they might sound.

There is a profound ethical dimension to this. Illogic not only leads to bad science; it can breed a society that is intellectually dishonest. That, in turn, hurts democracy and any hope for social justice. Language has a critical role to play here: if our language of discourse is designed to be unfalsifiable—playing on emotions and using loaded, ambiguous terms that lack clear definitions—the quality of our discourse suffers. When our engagement shrinks to fragments and social media polls, we lose the vocabulary of doubt that is essential for progress.

What’s the antidote? There are no silver bullets. But scientific temper is not a silo reserved for those who work in the field of science or technology; it must be a part of every discipline and every conversation. Understanding concepts such as thinking from first principles, falsifiability, statistical evidence, repeatability, cognitive biases, and logical fallacies aids all of us in this endeavour.

In an age where social media algorithms act as a “protective shield,” filtering out the “black swans” that might challenge our worldview, maintaining a scientific temper is a revolutionary act and an important contribution to society. It requires us to consciously break out of our inertia and seek evidence we have been conditioned to ignore. It moves us away from pre-packaged certainties and toward a more honest, accountable engagement with the world.

The social media algorithms as 'protective shield' metaphor really hits different when you think about how they literally curate away contradictory evidence. What strikes me is how Popper's falsifiability becomes almost radical in today's discourse - asking someone to define what would change their mind feels like a confrontational act now. I've seen policy debates where both sides refuse to specify failure conditions, which just turns everything into performative team sports. The humility piece is what we've lost most.